We might finally know how to use quantum computers to boost AI

Quantum computing has spent years stuck in a familiar purgatory. Too abstract for engineers who demand benchmarks. Too hardware-bound for theorists who want clean proofs. Meanwhile AI kept burning electricity, turning GPU clusters into industrial furnaces. The central objection to quantum machine learning never sounded mystical. It sounded logistical. Machine learning runs on data, and data has bulk. Getting ordinary data into a quantum computer seemed so costly that any advantage died during loading. A recent mathematical analysis argues that this bottleneck may be a design mistake. The proposed fix: feed the quantum machine in smaller batches, keep the computation moving, and stop insisting on an impossibly large quantum memory that holds the entire dataset in one pristine superposition.

The real fight sits in memory

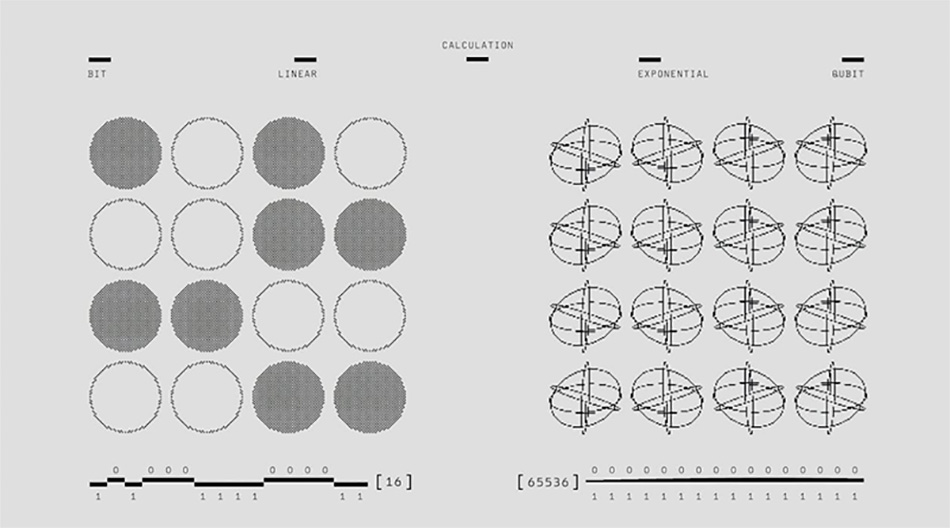

Pop explanations claim quantum computers win by doing “many computations at once.” That slogan sells. It doesn’t build systems. Modern machine learning often chokes on storage and movement, not multiplication. Earlier proposals leaned on quantum RAM that would store a full dataset as a superposition state ready for processing. Skeptics didn’t need to sneer. They could just count the required hardware and control precision. The new work targets ingestion directly. It asks how reviews, RNA reads, or detector hits can enter a quantum processor while preserving a real quantum benefit, without demanding a dedicated memory bank of absurd size. Memory dictates what gets trained and what gets thrown away.

Streaming beats hoarding

The key move borrows from ordinary computing. Systems stream. Nobody downloads an entire film before pressing play. The analysis argues that quantum data loading can work the same way. Instead of building and storing the full data superposition up front, the computer receives data in chunks, prepares what it needs, and proceeds. No giant preloaded archive. No all-at-once memory requirement. This isn’t a cute metaphor. It changes scaling. If a quantum machine can process more data at a smaller memory cost than any classical machine can match, the advantage becomes structural. The machine doesn’t need to own the dataset. It needs to digest it while the stream keeps coming.
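To make the streaming idea concrete, here is a minimal classical sketch in Python. It is only an analogy for the memory pattern described above, not the quantum algorithm from the analysis: the names `chunked`, `RunningStats`, and `stream_mean` are hypothetical, and the running mean stands in for whatever the learner actually tracks. The point it illustrates is that the working state stays the same size no matter how much data flows past.

```python
from typing import Iterable, Iterator, List


def chunked(source: Iterable[float], chunk_size: int) -> Iterator[List[float]]:
    """Yield successive chunks from a stream without holding all of it."""
    chunk: List[float] = []
    for value in source:
        chunk.append(value)
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:  # final, possibly shorter chunk
        yield chunk


class RunningStats:
    """Fixed-size summary (count and mean), updated one chunk at a time."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0

    def update(self, chunk: List[float]) -> None:
        # Incremental mean: no earlier values need to be kept around.
        for x in chunk:
            self.count += 1
            self.mean += (x - self.mean) / self.count


def stream_mean(source: Iterable[float], chunk_size: int = 1000) -> float:
    """Consume the stream chunk by chunk and return the mean of all values."""
    stats = RunningStats()
    for chunk in chunked(source, chunk_size):
        stats.update(chunk)  # the chunk can be discarded after this call
    return stats.mean


if __name__ == "__main__":
    # A generator stands in for detector hits or sequencing reads.
    readings = (float(i) for i in range(1, 1_000_001))
    print(stream_mean(readings, chunk_size=4096))
```

Memory use here depends on `chunk_size` and the two numbers inside `RunningStats`, not on the length of the stream. The analysis claims a quantum processor can exploit the same pattern, with the summary living in a small number of qubits.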

Logical qubits are the only ones that count

Bragging about raw qubit counts is like bragging about the number of instruments in an orchestra that can’t stay in tune. The relevant unit is the logical qubit, stabilized through error correction so it behaves reliably. The analysis makes a provocative comparison. Around 300 logical qubits could, in principle, beat any classical computer even if that classical computer had physically ridiculous resources. The point isn’t cosmic bragging rights. The point is that classical memory costs can explode in a way classical hardware can’t tame. Around 60 logical qubits might arrive by the end of the decade if engineering progress holds. That won’t replace mainstream AI training. It could still give an edge for certain data-heavy tasks by attacking memory pressure.
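A back-of-envelope calculation shows why numbers like 300 and 60 come up. The sketch below assumes the standard fact that n qubits span a state space of dimension 2^n, and uses the commonly cited rough estimate of about 10^80 atoms in the observable universe. It is an illustration of scale only. The analysis itself concerns memory for specific learning tasks, and its exact argument may differ. The function name `state_space_dimension` is hypothetical.

```python
# Rough, commonly cited order of magnitude; an assumption for illustration.
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80


def state_space_dimension(n_qubits: int) -> int:
    """Number of complex amplitudes needed to describe n qubits in general."""
    return 2 ** n_qubits


if __name__ == "__main__":
    for n in (60, 300):
        dim = state_space_dimension(n)
        exceeds = dim > ATOMS_IN_OBSERVABLE_UNIVERSE
        print(f"{n} qubits: about {dim:.2e} amplitudes, "
              f"more than atoms in the universe: {exceeds}")
```

At 300 qubits the count is roughly 2 x 10^90, so even one classical memory cell per atom would fall short by many orders of magnitude. At 60 qubits it is roughly 10^18, which is large but not beyond imagination for classical hardware, consistent with the article's framing of 60 logical qubits as an edge on certain tasks, not a knockout.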

Dequantisation remains the skeptic’s weapon

Quantum machine learning carries a scar. Many earlier algorithms looked impressive, then classical researchers dequantised them, keeping performance while dropping the quantum hardware. That history forces a blunt demand. Any new method must show that the quantum ingredient matters, not just clever bookkeeping that classical randomized methods can copy. The streaming approach now faces that test. Its target domains make sense. Large scientific instruments in particle physics, genomics, and astronomy produce torrents of data and discard most of it because memory can’t keep up. A system that learns from streams without storing the entire flood fits that world, even though it won’t replace most GPU workloads.

The shift here doesn’t come from a flashy new model. It comes from dropping the demand that all data must sit inside a quantum memory palace before learning begins. Treat the dataset as a stream. Accept batching. Design around memory as the primary constraint. That posture turns the old debate into a sharper test. Can quantum machines learn from large, real-world streams with less memory than classical machines require, and can they do it in a way that classical dequantisation can’t cheaply imitate? If the answer holds even for a narrow slice of tasks, the implications reach well beyond that slice.