Just Like Humans, Robots Can Be Made to Learn through Instructional Videos

You are mistaken if you think robots can only learn tasks through programming or by having instructions downloaded into their operating systems. Robots can also be taught by letting them watch how-to videos, much like the robot Chappie in the eponymous South African film released earlier this year, although real robots are far less intellectually capable and, in this case, learn without supervision.

RoboWatch

Researchers at Cornell University have been teaching robots to perform tasks by letting them watch videos that give instructions and demonstrate the task. Under the RoboWatch project, the researchers are trying to discover the underlying structure of how-to videos, without labelling or supervision, by having the system view an extensive collection of tutorial or instructional videos. According to the researchers, these videos have a starting point, an end point, and specific objective steps that together make up a structure a robot, or the artificial intelligence installed in a machine, can comprehend.

RoboWatch is a project that aims to develop the future of “personal robots.” With the algorithms they are working on, the researchers hope to establish the base technology for personal robots that may even try to learn things on their own by turning on the DVD player or browsing the Internet to view tutorial videos. These robots may also be used for various household chores, including dishwashing, cooking, doing the laundry, feeding the pets, and even assisting seniors or household members who have disabilities.

What Makes RoboWatch Different?

You are likely wondering why there would be a need for such a technology when robots can simply be programmed with the things they need to know and do. RoboWatch is different because it puts the emphasis on letting a robot learn without human intervention. It is essentially an artificial intelligence development project that focuses on machine learning from instructional videos.

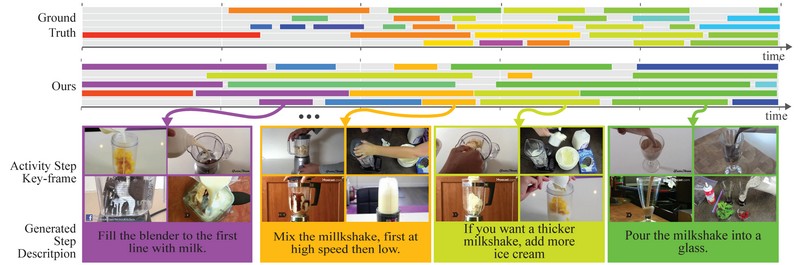

At the heart of RoboWatch is the development of an algorithm that enables machine learning by organizing and digesting the information contained in a multitude of instructional videos. It can parse videos without supervision, picking out the important parts and grouping similar parts into a storyline that makes more sense to an artificial intelligence system. The project’s official website illustrates this with the videos returned for the keywords “how to make an omelette.”

The algorithm discovers activities within the videos and parses them to create a more organized storyline in which similar details are grouped together. The segments are then color-coded, their key frames are visualized, and captions are automatically produced to point out the important information. The goal is to reduce hundreds or even thousands of instructional videos to simplified step-by-step instructions presented in natural language.
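The grouping idea described above can be illustrated with a toy sketch. This is not the researchers' algorithm: it is a simple stand-in that assumes each video segment has already been reduced to a relative position in its video (0 to 1) and a set of keywords, and it groups segments greedily by Jaccard similarity. The function names, the `threshold` parameter, and the sample data are all hypothetical.

```python
from collections import Counter


def jaccard(a, b):
    """Share of keywords two sets have in common (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def build_storyline(segments, threshold=0.5):
    """Group similar segments and return one short caption per group, in order.

    segments: list of (position, keywords) pairs, where position is the
    segment's relative place in its video (0.0 to 1.0) and keywords is a set.
    Assumption: real systems would derive these from video frames and
    narration; here they are given by hand.
    """
    clusters = []
    for position, keywords in segments:
        best, best_sim = None, threshold
        for cluster in clusters:
            sim = jaccard(keywords, set(cluster["keywords"]))
            if sim >= best_sim:
                best, best_sim = cluster, sim
        if best is None:
            # No existing group is similar enough, so start a new one.
            best = {"keywords": Counter(), "positions": []}
            clusters.append(best)
        best["keywords"].update(keywords)
        best["positions"].append(position)

    # Order the groups by where they tend to occur in the videos.
    clusters.sort(key=lambda c: sum(c["positions"]) / len(c["positions"]))

    captions = []
    for cluster in clusters:
        # Caption = the two most frequent keywords, ties broken alphabetically.
        ranked = sorted(cluster["keywords"].items(), key=lambda kv: (-kv[1], kv[0]))
        captions.append(" ".join(word for word, _ in ranked[:2]))
    return captions


# Hand-made segments from several imaginary omelette videos, in mixed order.
segments = [
    (0.90, {"fold", "omelette"}),
    (0.10, {"crack", "egg"}),
    (0.50, {"pour", "pan"}),
    (0.15, {"crack", "egg", "bowl"}),
    (0.85, {"fold", "omelette", "plate"}),
    (0.55, {"pour", "pan", "butter"}),
]
print(build_storyline(segments))
```

Even in this simplified form, the output is a short ordered list of steps rather than a pile of unrelated clips, which is the kind of reduction the project is aiming for.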

In the film “Chappie,” the robot character was guided by humans to learn different things, from identifying certain objects to establishing rules and doing tasks like using a gun and punching people. RoboWatch is different as the robot is supposed to handle everything on its own, as long as the robot is only dealing with videos that provide instructions on the same specific task or activity.

Meticulous Process

With so many online videos matching the keywords used in a query, it is only to be expected that RoboWatch will encounter many videos that are unrelated or irrelevant to the topic being searched. To get rid of these “outliers,” the videos that come with the search results but are not useful or relevant, the algorithm analyzes each video individually to find frequently recurring objects and also analyzes the narration or sounds that accompany the videos. Through these steps, videos such as appliance commercials or scenes from movies or TV shows, which are obviously unrelated or useless for the parsing process, are omitted.
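As a rough illustration of the outlier step, the sketch below keeps only the videos that share enough of the items that recur across the whole collection. It is a simplified stand-in, not the project's actual method: it assumes each video has already been turned into a set of labels (detected objects and narration words), and the function name, the two thresholds, and the sample data are hypothetical.

```python
from collections import Counter


def filter_outliers(videos, min_share=0.5, min_overlap=0.5):
    """Return the ids of videos that look relevant to the searched task.

    videos: dict mapping a video id to a set of labels (assumed to come
    from object detection and narration analysis).
    min_share: a label counts as "recurring" if it appears in at least
    this fraction of the videos.
    min_overlap: a video is kept if it contains at least this fraction
    of the recurring labels.
    """
    total = len(videos)
    if total == 0:
        return []

    # Count in how many videos each label appears.
    video_counts = Counter(label for labels in videos.values() for label in set(labels))
    recurring = {label for label, n in video_counts.items() if n / total >= min_share}
    if not recurring:
        return list(videos)

    kept = []
    for video_id, labels in videos.items():
        overlap = len(recurring & set(labels)) / len(recurring)
        if overlap >= min_overlap:
            kept.append(video_id)
    return kept


# Three imaginary omelette tutorials and one appliance commercial.
videos = {
    "tutorial_a": {"egg", "pan", "whisk"},
    "tutorial_b": {"egg", "pan", "butter"},
    "tutorial_c": {"egg", "pan", "whisk", "plate"},
    "commercial": {"blender", "price"},
}
print(filter_outliers(videos))
```

The commercial shares none of the recurring labels with the tutorials, so it is dropped, while a tutorial that is missing one common item is still kept.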

Availability

Unfortunately, this technology is not expected to become available for mass use in the near future. The good news, though, is that all the knowledge compiled from the testing conducted with RoboWatch is stored in an online knowledge base called RoboBrain. RoboBrain serves as an online resource that robots anywhere can tap to learn various useful tasks or skills.