Highly Dexterous and Tactile Human-Like Robot Hand Can Work in the Dark

Humans often rely on their sense of touch to carry out tasks without looking at what they are doing, an ability that is difficult to mimic in robots. According to a statement from Columbia Engineering, researchers there have developed a robotic hand that replicates the tactile skills of humans. The hand can manipulate objects without depending on vision, so it can function in difficult lighting conditions or in dark environments. To achieve this, the researchers combined advanced touch sensing with machine learning and motor learning algorithms.

Achieving dexterity

Many research groups have tried to make robotic hands more dexterous, but progress has been difficult. Many robotic systems rely on grippers and suction cups to pick and place objects gently, while tasks that require more agility, such as reorientation, insertion, and assembly, are still done by humans.

However, advances in sensing technology and in machine learning methods that can analyze the collected data have begun to transform robotic manipulation.

Novel design

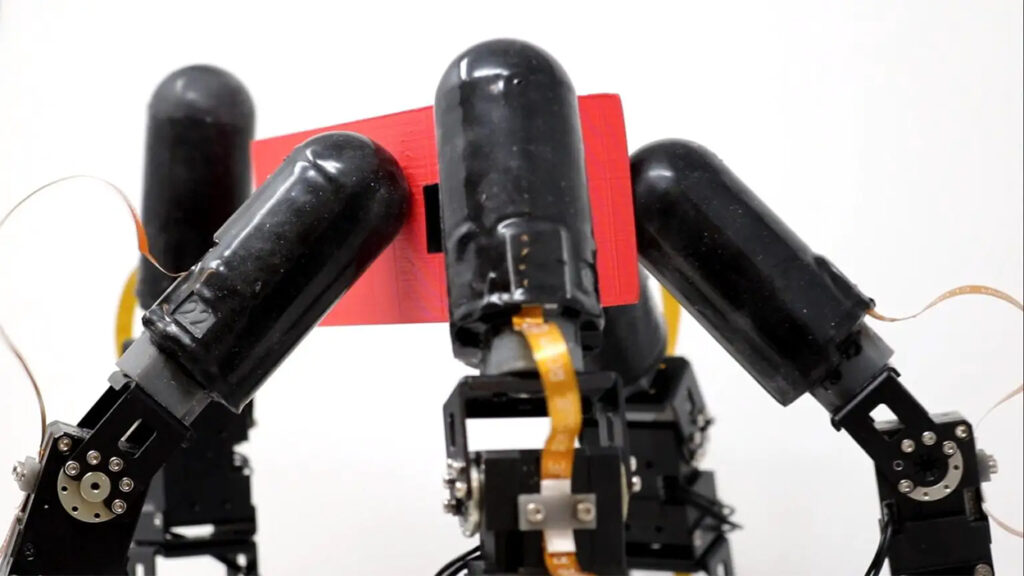

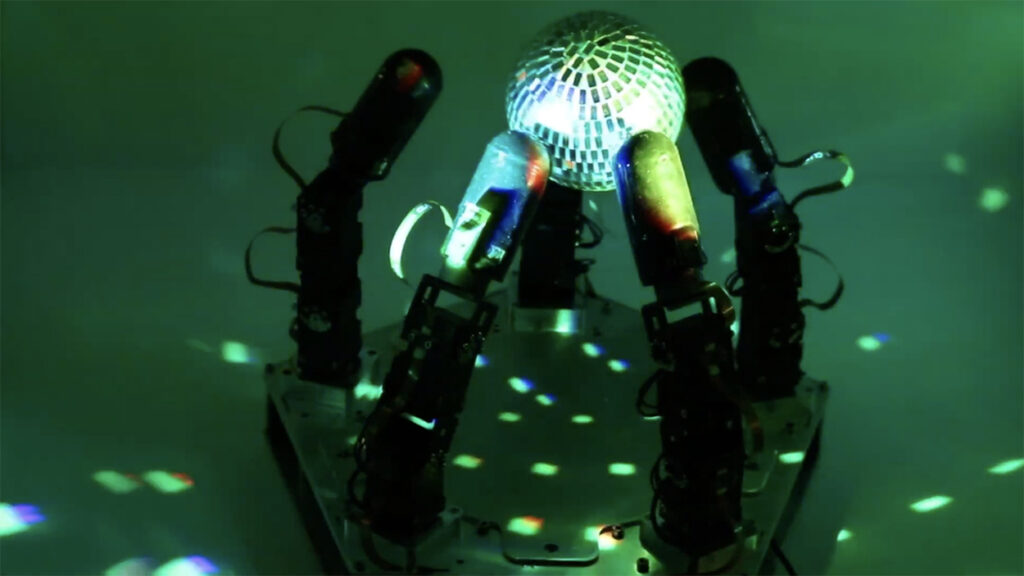

The engineers designed and built a robotic hand with five touch-sensitive fingers and 15 independently actuated joints. They used new motor learning methods to teach the hand complex manipulation tasks through practice. Because the hand relies on a highly sensitive sense of touch rather than on vision, it is immune to optical problems such as occlusion, dim lighting, or complete darkness. Alongside touch, it relies on proprioception, a system's sense of its own movement, force, and position.

The researchers fed the proprioceptive data into a deep reinforcement learning program trained in simulation, which allowed the hand to complete about one year of practice in only a few hours of real time. Highly parallel processors and modern physics simulators made this possible.
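The arithmetic behind that speedup can be sketched in a few lines. The example below is an illustration only: the number of parallel environments and the real-time factor are hypothetical values, not figures reported by the Columbia team, and `wall_clock_hours` is a helper name made up for this sketch.

```python
HOURS_PER_YEAR = 365 * 24  # about 8,760 hours of simulated practice


def wall_clock_hours(sim_hours, num_envs, realtime_factor=1.0):
    """Return the wall-clock hours needed to collect `sim_hours` of experience.

    num_envs: simulated hands stepped in parallel (hypothetical value).
    realtime_factor: how many times faster than real time each simulated
        hand runs (hypothetical value; 1.0 means real-time speed).
    """
    if num_envs <= 0 or realtime_factor <= 0:
        raise ValueError("num_envs and realtime_factor must be positive")
    # Experience accumulates across all environments at once, so the
    # total is divided by the combined simulation throughput.
    return sim_hours / (num_envs * realtime_factor)


# Illustrative assumption: 4,096 simulated hands running at real-time speed.
hours = wall_clock_hours(HOURS_PER_YEAR, num_envs=4096)
print(f"{hours:.2f} wall-clock hours for one simulated year")
```

Under these assumed numbers, a year of practice fits into a little over two hours, which is consistent in order of magnitude with the "few hours" the researchers describe.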

Task demo

To demonstrate the hand's skill, the engineers chose a difficult manipulation task: performing a large rotation of an unevenly shaped object held in the hand. The objective was to show that the hand can manipulate the object while keeping it in a secure, stable grip so that it does not drop. The demonstration was successful. Moreover, the hand performed the task without any visual feedback, showing that it can work in the dark.

Future applications

The research team believes this level of dexterity will open up many new applications for robotic manipulation. These include material handling and logistics, where more capable robots could help ease supply chain problems in the U.S., as well as advanced manufacturing and assembly lines in factories.

Another application the researchers are considering is assistive robotics in the home, where this kind of dexterity would be needed.

End goal

The Columbia engineers’ end goal is to combine embodied intelligence with abstract intelligence. They hope that physically capable robots can take semantic intelligence from the virtual world into physical chores in the real world, including in homes. They are also considering adding visual feedback, which, together with the tactile sense, could give the hand even more dexterity. Farther down the road, they want to work on replicating the human hand.