Beyond Facial Recognition: New Tech ‘Can Read’ How Long You’ll Likely Live

No, this is not going to be a biology or health post. It is about a new technology that supposedly estimates how long a person will live by scanning the face. The idea is to use facial recognition technology to gauge how quickly a person is aging and, from that, how long he or she can be expected to live.

New research led by Jay Olshansky, a biodemographer at the University of Illinois at Chicago, is expected to attract the interest of insurance companies, because it may soon make it possible to set “more accurate” premiums for every individual applicant for a health or life insurance plan. By using facial recognition or scanning technology, insurers may significantly reduce the instances, undesirable from their point of view, of having to deal with claims that come too soon.

The Base Idea

This new technology is based on the observation that people who live longer usually look younger. A study of young-looking people and longevity from some five years ago supports this idea. In 2009, Danish scientists found that people who look young for their age tend to live longer than those who appear to age typically and those who look older than they actually are.

The Danish researchers observed 387 pairs of twins to reach their conclusions. They also hypothesized that DNA structures called telomeres could be responsible for how youthful a person looks. Telomeres are associated with the ability of cells to replicate and can indicate how prone a person is to certain diseases. People who have shorter telomeres tend to age faster, while those who have longer telomeres look younger for their age.

The Face-Reading Technology for Estimating Life Span

Jay Olshansky worked with Karl Ricanek, a computer science professor at the University of North Carolina at Wilmington who also worked on facial recognition tech at three US government agencies. Together, they developed a program that uses sophisticated algorithms to analyze photos of faces. They then launched a website, called Face My Age, to test and further improve the effectiveness of the program.

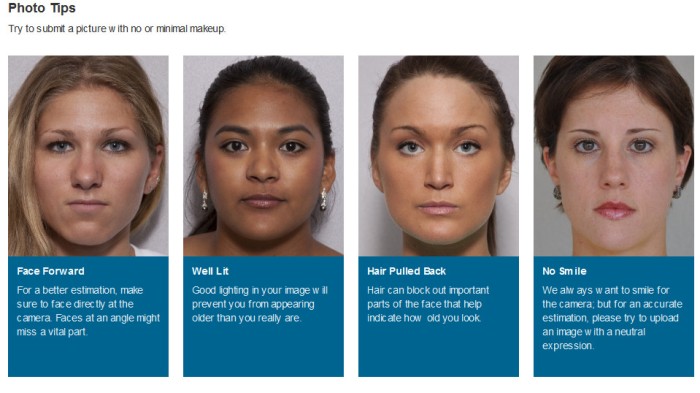

The website estimates a person’s “face age” by analyzing a photo together with details of the person’s age in the picture, gender, ethnicity, and birth date. The person in the photo should not be wearing makeup. Good lighting is also recommended, since poor lighting can make a face look older. The photo should be a frontal shot with no hair or other items covering the face, and the person should have a neutral expression rather than a smile. Of course, those who have had plastic surgery to look younger cannot expect an honest “face age” estimate from the website and are discouraged from sending their photos.

Face My Age is not intended to guess a person’s age. Rather, it aims to tell a person how fast he or she is aging. The site also aims to accumulate more information about aging among people of different racial backgrounds, ages, and genders. The developers hope that more people will submit their photos and details to make the site more “accurate” in evaluating “face ages.”

Personalized Face Age Reading Approach

According to Ricanek, the technology they created differs from the current approach to face aging, in which “the lines they [the current approach] paint on your face are actually the same as the lines they paint on my face.” Ricanek claims that their system has the advantage of being individualized, as it draws on actual data from many people with different backgrounds.

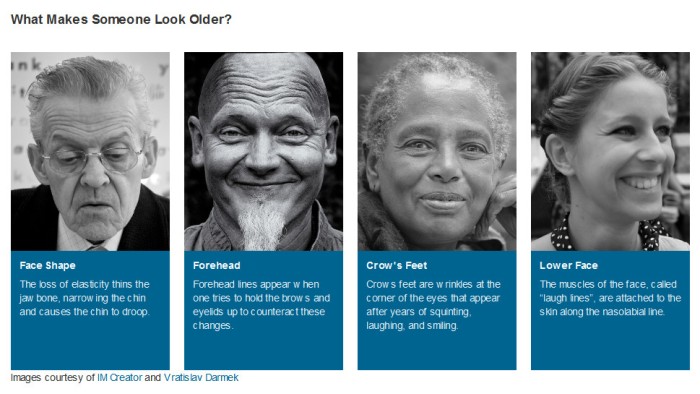

Olshansky, for his part, says that their system, once refined, should be able to assign different ages to different parts of the face. For now, the system generates only one age number, for the overall facial appearance.

Work in Progress

Face My Age, at the moment, does not guarantee highly accurate face age readings. However, as more photos are submitted over time (the developers hope to get more than 20,000), the system is expected to become more “accurate.”

This new technology is expected to be useful to the insurance industry, so it is not just a matter of aesthetic concern. With its potential for evaluating aging and lifespan, it can guide insurers in setting the premiums they charge their customers. It certainly is a good idea, one that puts to good use advances in facial recognition tech similar to the accuracy boost achieved by Facebook.